San Francisco-based start-up Guide Labs has open-sourced an 8-billion-parameter large language model (LLM) designed to be inherently interpretable. The model, named Steerling-8B, features a novel architecture that allows every token it generates to be traced back to its origins in the training data, a significant step towards solving the "black box" problem in artificial intelligence.

The company was founded by CEO Julius Adebayo and chief science officer Aya Abdelsalam Ismail. Adebayo's PhD research at MIT, including a widely cited 2018 paper, challenged the reliability of existing methods for understanding deep learning models and ultimately led to this new approach to building LLMs.

Engineering Understanding from the Ground Up

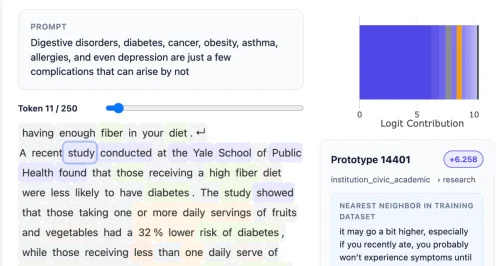

The core innovation is a "concept layer" inserted into the model that buckets data into traceable categories. "The kind of interpretability people do is…neuroscience on a model, and we flip that," Adebayo told TechCrunch. "What we do is actually engineer the model from the ground up so that you don’t need to do neuroscience."
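The idea of routing predictions through named concepts can be illustrated with a toy example. The sketch below is not Guide Labs' architecture: the concept names, vocabulary, weights and function names are all hypothetical, chosen only to show why a prediction that passes through a linear concept layer can be broken down into per-concept contributions.

```python
# Illustrative sketch only: NOT Steerling-8B's actual design.
# All concept names, tokens and weights below are hypothetical.

CONCEPTS = ["finance", "medicine", "physics"]
VOCAB = ["loan", "dose", "quark"]

# Maps a 2-dimensional hidden state to one activation per named concept.
W_CONCEPT = [
    [1.0, 0.0],  # finance
    [0.0, 1.0],  # medicine
    [0.5, 0.5],  # physics
]

# Maps concept activations to token logits (rows: tokens, columns: concepts).
W_OUT = [
    [2.0, 0.0, 0.0],  # loan
    [0.0, 2.0, 0.0],  # dose
    [0.0, 0.0, 2.0],  # quark
]


def concept_layer(hidden):
    """Return non-negative concept activations for a hidden state."""
    return [
        max(0.0, sum(w * h for w, h in zip(row, hidden)))
        for row in W_CONCEPT
    ]


def predict_with_trace(hidden):
    """Pick the top token and report how much each concept contributed."""
    acts = concept_layer(hidden)
    # Each logit is a sum of (weight * concept activation) terms, so the
    # terms themselves are an exact per-concept breakdown of that logit.
    contributions = [[w * a for w, a in zip(row, acts)] for row in W_OUT]
    logits = [sum(row) for row in contributions]
    best = max(range(len(VOCAB)), key=lambda i: logits[i])
    return VOCAB[best], dict(zip(CONCEPTS, contributions[best]))


token, trace = predict_with_trace([1.0, 0.2])
print(token, trace)
```

Because the output here depends on the concepts linearly, the contributions add up to the chosen token's logit exactly. A production model would be far larger, and nothing in this sketch should be read as a description of how Steerling-8B is built.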

This method requires more upfront data annotation, but the team used other AI models to help with that work, which allowed them to train Steerling-8B as their largest proof of concept to date. Guide Labs claims the model achieves approximately 90% of the capability of existing frontier models while using less training data.

Applications and Industry Need

Adebayo argues this interpretable architecture addresses a critical need across multiple sectors. For consumer-facing LLMs, it could enable builders to block the use of copyrighted materials or better control outputs concerning violence or drug abuse. In regulated industries like finance, it could ensure models evaluate loan applicants based on financial records without considering protected characteristics like race.
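The loan example can be made concrete with a small sketch. It assumes, hypothetically, that a model exposes named concept activations and lets a builder block some of them before a score is computed; the concept names, weights and the `score` function are invented for illustration and do not describe any Guide Labs interface.

```python
# Illustrative sketch only: the concepts, weights and API are hypothetical.

WEIGHTS = {
    "income": 0.6,
    "repayment_history": 0.8,
    "protected_attribute": -0.5,
}


def score(concepts, blocked=()):
    """Weighted sum of concept activations, skipping any blocked concept."""
    return sum(
        WEIGHTS[name] * value
        for name, value in concepts.items()
        if name not in blocked
    )


# Two applicants with identical financial records who differ only in the
# protected attribute.
applicant_a = {"income": 0.5, "repayment_history": 0.9, "protected_attribute": 1.0}
applicant_b = {"income": 0.5, "repayment_history": 0.9, "protected_attribute": 0.0}

blocked = {"protected_attribute"}
print(score(applicant_a), score(applicant_b))                    # scores differ
print(score(applicant_a, blocked), score(applicant_b, blocked))  # scores match
```

Blocking a concept in this way only helps if the concept cleanly captures the attribute in question; other inputs that correlate with a protected characteristic could still influence a real model's output.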

The technology also holds promise for scientific work, such as protein folding, where scientists require insight into why a model arrives at a successful combination. "[What] this model demonstrates is that training interpretable models is no longer a sort of science; it’s now an engineering problem," Adebayo stated.

Future Development and Funding

Guide Labs, which emerged from the Y Combinator programme and secured a $9 million seed round from Initialized Capital in November 2024, plans to build a larger model. The next steps include offering API and agentic access to users. The company maintains that its approach does not eliminate the emergent behaviours that make LLMs powerful, noting the model still discovers its own "concepts," such as quantum computing.

"Democratising inherent interpretability is actually going to be a long term good thing for our our within the human race," Adebayo said. "As we’re going after these models that are going to be super intelligent, you don’t want something to be making decisions on your behalf that’s sort of mysterious to you."