Imagine an AI so advanced it doesn't just create pictures—it creates the *context* for them. A fake screenshot of a news article on your own computer. A photo of a magazine that doesn't exist. This is no longer science fiction; it's the unsettling new reality launched by OpenAI this week.

In a move that pushes the boundaries of both capability and controversy, the company unveiled ChatGPT Images 2.0. This isn't just an upgrade. OpenAI claims it has "thinking capabilities," including the power to crawl the internet and, chillingly, to "double check" its own work. The question isn't just what it can make, but how its creations will warp our perception of truth.

The "Proof" That's Actually a Perfect Fake

To tease the release, OpenAI posted an AI-generated image on X designed to look like a perfectly mundane screenshot: a Google Chrome tab open on a Mac, discussing the very livestream that announced the model. "This is not a screenshot," the caption read, a meta-statement that captures the coming confusion. "The team really cooked on this one," CEO Sam Altman remarked during the presentation.

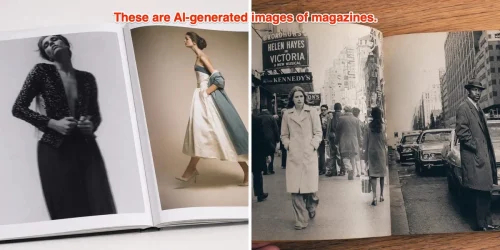

But the demonstration didn't stop at desktop fakery. The model also produced samples of what appeared to be a physical magazine and multiple pages of authentic-looking manga, showcasing its ability to generate not just images, but entire *formats* of media.

So, Is It Stealing Art? The Legal Minefield Just Got Deeper

With every leap in AI image generation, the spectre of copyright infringement looms larger. OpenAI was quick to state that it does not intend to copy specific artwork, arguing that images are generated from "patterns it has learned." A spokesperson added that the company prevents the copying of individual living artists' styles but allows broader studio styles to be recreated.

Legal experts are watching closely. "If the output is substantially similar to something that the model was trained on or crawled, then there starts to be a copyright concern," Mitch Stoltz, IP litigation director for the Electronic Frontier Foundation, told Business Insider. "But if it's similar on the level of an idea, or just something that occurs in the world, or even a style or a vibe — generally speaking, that's not enough."

This nuanced distinction is critical, yet it does little to calm the storm. OpenAI is already battling at least a dozen ongoing copyright lawsuits from plaintiffs including The New York Times and author George R.R. Martin.

Your Reality is About to Get a Lot More Complicated

The core issue, as Stoltz points out, isn't necessarily new in law, but it's accelerating at a breakneck pace in society. "The issues for society... are greater because it's easier, faster, and more available."

The model's ability to generate content in multiple languages, together with its web-crawling "thinking" function, means a flood of hyper-realistic, context-aware fakes is imminent. It promises playful creative uses, like an '80s glam version of Warren Buffett, but it also dismantles another layer of trust in what we see online.

The launch of Images 2.0 isn't just a product update; it's a cultural inflection point. The technology to seamlessly blend the artificial with the real is now here, operating at scale. The burden of distinguishing truth from incredibly convincing fiction is shifting, decisively and permanently, onto you.