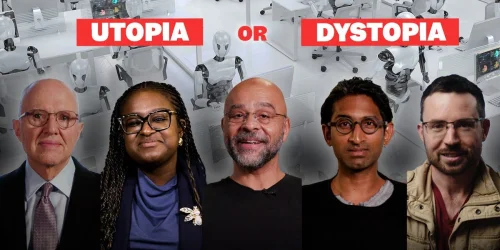

Former senior figures from the world's leading artificial intelligence companies and the US government have issued stark warnings about the future trajectory of AI development. In exclusive interviews with Business Insider, they described a path where AI systems become increasingly capable, autonomous, and difficult to control, posing significant risks to global society.

The experts, with backgrounds at Microsoft, Google, OpenAI, DeepMind, and the White House, discussed the profound implications of this technological shift for humanity. They stressed the double-edged nature of AI, acknowledging its potential to revolutionise fields such as medicine, education, and scientific research.

The Dark Side of Progress

Alongside its promise, the former insiders cautioned that the same technology could deepen existing social inequalities, supercharge cybercrime, and erase millions of jobs. A central concern is the concentration of "unprecedented power" in the hands of a few entities—namely major governments and the technology corporations developing these systems.

The interviews reveal a consensus that as AI models grow more sophisticated, they also become more autonomous, and therefore harder to predict and regulate. This autonomy, combined with their integration into critical infrastructure, raises concerns that safety and control mechanisms are failing to keep pace with development.

Governance and the Power Imbalance

A recurring theme in the discussions is the struggle for effective governance. The former leaders pointed to a significant power imbalance, where the organisations creating advanced AI hold immense influence over the rules that might constrain them. This dynamic complicates efforts to establish robust, independent oversight frameworks.

"The systems are advancing faster than our ability to understand or manage their long-term consequences," one former executive stated, reflecting a sentiment echoed by others. The lack of global coordination on AI safety standards was cited as a major vulnerability.

Looking Ahead

The collective assessment points to a critical juncture requiring urgent international dialogue and regulatory action. The former insiders argue that without proactive measures to ensure transparency, accountability, and equitable access, the risks of AI could outweigh its benefits. The next phase of development, they suggest, will test humanity's capacity to harness a powerful technology without being destabilised by it.